Quick Facts

- Category: Digital Marketing

- Published: 2026-05-05 07:06:39

Introduction

When millions of people turn to Facebook Groups every day for trusted advice—whether it's diagnosing a plant problem, validating a vintage car purchase, or finding the perfect recipe—they expect to find answers quickly. Yet traditional keyword-based search often fails, leaving users frustrated by missed results, buried comments, and scattered wisdom. This guide walks you through the exact steps we took to modernize Facebook Groups Search, moving from a simple lexical system to a hybrid retrieval architecture that dramatically improves discovery, consumption, and validation of community content. By the end, you'll understand how to implement a similar system in your own platform, complete with automated evaluation to maintain quality.

What You Need

- Understanding of search architectures – familiarity with lexical (TF-IDF, BM25) and semantic (vector embeddings, transformer models) approaches.

- Access to community data – a corpus of group posts, comments, and metadata (titles, timestamps, author info).

- Infrastructure for model training and serving – GPU compute, a vector index or database (e.g., FAISS, Pinecone), and an evaluation pipeline.

- Evaluation framework – ability to run automated tests (e.g., using ground-truth relevance judgments) and track metrics like engagement and error rate.

- Team with cross-functional skills – engineers, data scientists, and product managers focused on search quality.

Step 1: Map the Friction Points in Community Knowledge

Before building any solution, you must deeply understand the three core pain points users face:

Discovery: When Language Doesn't Match Intent

Traditional lexical systems rely on exact word matches. If a user searches for “small individual cakes with frosting” but the community calls them “cupcakes,” the search returns nothing. This “lost in translation” problem is the first friction point. Document these mismatches by analyzing query logs and community vocabulary.

Consumption: The Effort Tax

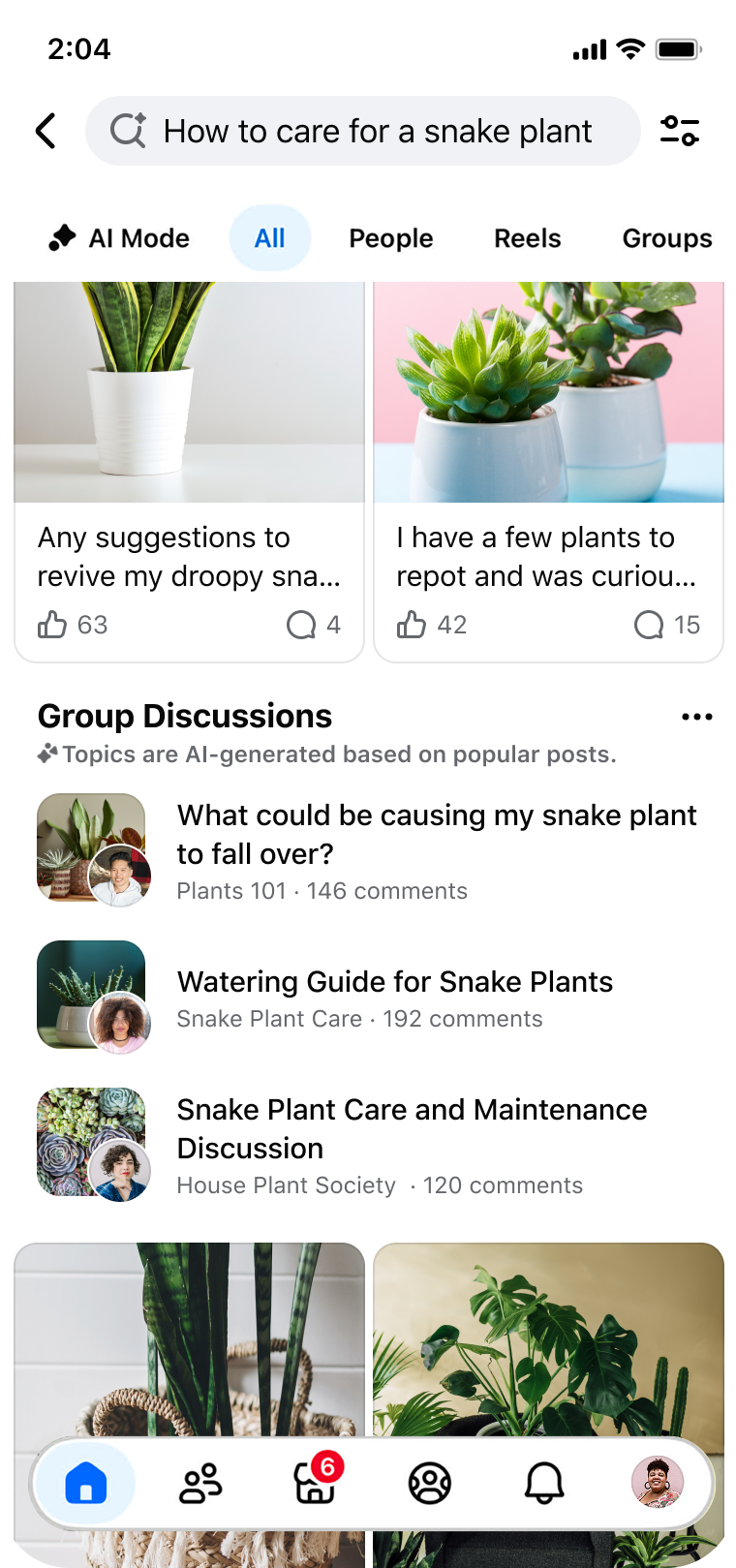

Even when users find the right post, they must scroll through dozens of comments to extract a consensus. For example, searching “tips for snake plant care” yields a thread with 50+ replies; the user must manually piece together a watering schedule. This effort tax lowers satisfaction and increases bounce rates.

Validation: Unlocking Hidden Expertise

When making high-stakes decisions—like buying a vintage Corvette on Marketplace—users want to validate their choice with trusted community knowledge. That wisdom is scattered across multiple group discussions. Your system must surface it in a consolidated, trustworthy way.

Create a quantitative map of these friction points using user surveys, session replay, and search abandonment rates. This becomes your baseline for improvement.
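As a rough illustration of that baseline, the sketch below computes a zero-result rate and an abandonment rate from search sessions. The `SearchSession` fields and the definition of abandonment (results shown, nothing clicked) are assumptions made for this example, not a description of any real logging schema.

```python
from dataclasses import dataclass


@dataclass
class SearchSession:
    query: str
    result_count: int  # number of results the search returned
    clicked: bool      # whether the user opened any result


def friction_baseline(sessions):
    """Return zero-result and abandonment rates for a list of sessions."""
    total = len(sessions)
    if total == 0:
        return {"zero_result_rate": 0.0, "abandonment_rate": 0.0}
    zero = sum(1 for s in sessions if s.result_count == 0)
    # Assumed definition: results were shown but the user clicked nothing.
    with_results = [s for s in sessions if s.result_count > 0]
    abandoned = sum(1 for s in with_results if not s.clicked)
    return {
        "zero_result_rate": zero / total,
        "abandonment_rate": abandoned / len(with_results) if with_results else 0.0,
    }


sessions = [
    SearchSession("small individual cakes with frosting", 0, False),
    SearchSession("cupcakes", 12, True),
    SearchSession("tips for snake plant care", 8, False),
    SearchSession("vintage Corvette buying tips", 5, True),
]
baseline = friction_baseline(sessions)
```

Tracking the same two numbers after each change gives a simple before-and-after comparison for the discovery friction point.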

Step 2: Adopt a Hybrid Retrieval Architecture

The core innovation is moving away from pure lexical search to a hybrid model that combines the strengths of keyword matching and semantic understanding.

Implement Lexical (Keyword) Retrieval

Keep a BM25 index for fast exact matching. It handles queries like “snake plant watering schedule,” where the searcher and the community use the same terms, and it preserves the low latency and exact-term precision of traditional search.
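To make the lexical side concrete, here is a minimal in-memory BM25 scorer. It is a teaching sketch under simple assumptions (whitespace tokenization, the common defaults `k1=1.5` and `b=0.75`, a smoothed IDF variant); a production system would normally rely on an existing search engine rather than hand-rolled scoring.

```python
import math
from collections import Counter


def tokenize(text):
    # Assumption: lowercase whitespace tokenization is enough for the demo.
    return text.lower().split()


class BM25Index:
    def __init__(self, docs, k1=1.5, b=0.75):
        self.k1, self.b = k1, b
        self.docs = [tokenize(d) for d in docs]
        self.n = len(self.docs)
        self.avgdl = sum(len(d) for d in self.docs) / self.n
        self.tf = [Counter(d) for d in self.docs]
        # Document frequency: number of documents containing each term.
        self.df = Counter(term for d in self.docs for term in set(d))

    def idf(self, term):
        df = self.df.get(term, 0)
        return math.log((self.n - df + 0.5) / (df + 0.5) + 1.0)

    def score(self, query, idx):
        tf, dl = self.tf[idx], len(self.docs[idx])
        total = 0.0
        for term in tokenize(query):
            f = tf.get(term, 0)
            if f == 0:
                continue
            norm = f + self.k1 * (1 - self.b + self.b * dl / self.avgdl)
            total += self.idf(term) * f * (self.k1 + 1) / norm
        return total

    def search(self, query, k=3):
        scored = [(self.score(query, i), i) for i in range(self.n)]
        # Documents sharing no term with the query are dropped entirely.
        return sorted((s, i) for s, i in scored if s > 0)[::-1][:k]


index = BM25Index([
    "snake plant watering schedule for beginners",
    "cappuccino foam tips",
    "how often to water a snake plant",
])
exact_hits = index.search("snake plant watering schedule")
gap_hits = index.search("Italian coffee drink")  # no shared terms
```

The second query comes back empty even though a cappuccino post exists, which is the lexical gap the semantic retriever is meant to close.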

Add Semantic (Vector) Retrieval

Train or fine-tune a dense embedding model (e.g., BERT, Sentence-BERT) to represent both queries and group posts as vectors in a high-dimensional space. Use cosine similarity to match “Italian coffee drink” with “cappuccino” even when no words overlap. Index the vectors with a similarity-search library or vector database (e.g., FAISS, Milvus) so that lookups scale to millions of group items.
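The matching step itself is small. The sketch below ranks items by cosine similarity using hand-written three-dimensional vectors; these numbers are invented stand-ins for real model output, which would have hundreds of dimensions and would be searched with an approximate index rather than a full scan.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest(query_vec, item_vecs, k=2):
    """Brute-force nearest neighbours by cosine similarity."""
    scored = [(cosine(query_vec, vec), name) for name, vec in item_vecs.items()]
    return sorted(scored, reverse=True)[:k]


# Hypothetical embeddings, made up for illustration only.
item_vecs = {
    "cappuccino": [0.9, 0.1, 0.0],
    "cupcakes": [0.2, 0.9, 0.1],
    "snake plant": [0.0, 0.1, 0.9],
}
query_vec = [0.8, 0.2, 0.1]  # stand-in for the query "Italian coffee drink"
top = nearest(query_vec, item_vecs, k=1)
```

Here the query vector lands closest to “cappuccino” despite sharing no words with it, because similarity is computed in the embedding space rather than over tokens.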

Combine and Rank

Use a fusion strategy (e.g., weighted linear combination or learning-to-rank) to merge results from both systems. Tune weights based on offline metrics so that neither system dominates incorrectly. The hybrid architecture ensures you capture both exact matches and conceptual relatedness.
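One way to sketch the weighted linear combination is shown below. BM25 scores and cosine similarities live on different scales, so this example min-max normalizes each list before mixing; that normalization choice, the `weight_lexical` parameter, and the sample scores are all assumptions for illustration.

```python
def min_max(scores):
    """Rescale a {doc: score} mapping to the range [0, 1]."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def fuse(lexical, semantic, weight_lexical=0.5):
    """Weighted linear fusion of two retrievers' normalized scores."""
    lex, sem = min_max(lexical), min_max(semantic)
    fused = {}
    for doc in set(lex) | set(sem):
        # A document missing from one retriever contributes 0 from that side.
        fused[doc] = (weight_lexical * lex.get(doc, 0.0)
                      + (1 - weight_lexical) * sem.get(doc, 0.0))
    # Sort by score descending, then by id so ties are deterministic.
    return sorted(fused.items(), key=lambda kv: (-kv[1], kv[0]))


lexical = {"post_a": 7.2, "post_b": 3.1}                      # BM25-style scores
semantic = {"post_b": 0.91, "post_c": 0.88, "post_a": 0.40}  # cosine scores
ranked = fuse(lexical, semantic, weight_lexical=0.4)
```

Sweeping `weight_lexical` against offline metrics is a simple way to do the tuning described above; a learning-to-rank model replaces the fixed weight with learned ones.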

Step 3: Implement Automated Model-Based Evaluation

To validate that your changes actually improve user experience without increasing error rates, you need a robust evaluation pipeline.

Create Ground Truth Data

Human annotators label relevance for a diverse set of queries (e.g., 1,000 query-document pairs) covering discovery, consumption, and validation scenarios. Use inter-annotator agreement to ensure quality.
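Inter-annotator agreement is often reported as Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below computes it for two annotators; the binary labels are invented sample data.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently.
    expected = sum(count_a[label] * count_b[label]
                   for label in set(count_a) | set(count_b)) / (n * n)
    if expected == 1.0:
        return 1.0
    return (observed - expected) / (1 - expected)


# 1 = relevant, 0 = not relevant, for eight query-document pairs.
annotator_1 = [1, 1, 0, 1, 0, 0, 1, 0]
annotator_2 = [1, 1, 0, 0, 0, 0, 1, 1]
kappa = cohens_kappa(annotator_1, annotator_2)
```

The two annotators agree on six of eight pairs (0.75 raw agreement), but with chance agreement at 0.5 the kappa is 0.5, a reminder that raw agreement can overstate label quality.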

Build Automated Metric Trackers

Compute standard IR metrics: NDCG, MRR, recall@k. Also track engagement metrics like click-through rate (CTR) on search results, time spent on page, and scroll depth within threads. Most importantly, monitor error rates—any significant increase (say >0.5%) triggers a rollback.
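The three IR metrics can be sketched for a single query as follows. The document ids and graded gains are made up; MRR is the mean of the per-query reciprocal rank over the whole query set, and NDCG here uses the linear-gain form with a log2 discount.

```python
import math


def recall_at_k(ranked, relevant, k):
    """Fraction of relevant documents that appear in the top k."""
    if not relevant:
        return 0.0
    return len(set(ranked[:k]) & set(relevant)) / len(relevant)


def reciprocal_rank(ranked, relevant):
    """1 / rank of the first relevant document, or 0 if none is found."""
    for rank, doc in enumerate(ranked, start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0


def ndcg_at_k(ranked, gains, k):
    """NDCG@k with graded relevance `gains` ({doc: gain})."""
    dcg = sum(gains.get(doc, 0) / math.log2(i + 2)
              for i, doc in enumerate(ranked[:k]))
    ideal = sorted(gains.values(), reverse=True)[:k]
    idcg = sum(g / math.log2(i + 2) for i, g in enumerate(ideal))
    return dcg / idcg if idcg > 0 else 0.0


gains = {"d1": 3, "d2": 2, "d5": 1}   # hypothetical human judgments
ranked = ["d3", "d1", "d7", "d2"]     # hypothetical system output
recall = recall_at_k(ranked, gains.keys(), k=3)
rr = reciprocal_rank(ranked, gains)
ndcg = ndcg_at_k(ranked, gains, k=4)
```

Averaging these values over the labeled query set gives the offline numbers to compare between the lexical baseline and the hybrid system.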

Run Online A/B Tests

Deploy the hybrid system to a small percentage of users (e.g., 5%). Compare against the control (pure lexical) group on key metrics. Our results showed improved search engagement and relevance with no increase in error rates, which is the outcome the evaluation pipeline is meant to confirm before a wider rollout.
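A common way to pick that small percentage is deterministic hash bucketing, so that a given user always sees the same variant. The sketch below is one such scheme; the experiment name `hybrid_search_v1` and the user id format are hypothetical.

```python
import hashlib


def in_treatment(user_id, experiment="hybrid_search_v1", percent=5.0):
    """Deterministically assign roughly `percent`% of users to treatment."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the hash to one of 10,000 buckets (0.01% granularity).
    bucket = int(digest[:8], 16) % 10_000
    return bucket < percent * 100


# Simulated population to check the realised split.
assignments = [in_treatment(f"user_{i}") for i in range(20_000)]
treatment_share = sum(assignments) / len(assignments)
```

Including the experiment name in the hash keeps assignments independent across experiments, so the same users are not always the ones placed in treatment.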

Step 4: Solve the Discovery Friction with Semantic Matching

The biggest win from hybrid retrieval is overcoming lexical gaps.

Train your embedding model on a large corpus of group conversations so it learns community-specific slang, abbreviations, and synonyms. Use data augmentation: for example, create paired examples like (“Italian coffee drink”, “cappuccino”) to reinforce non-obvious matches. Over several weeks, measure how many previously zero-result queries now return relevant content. This directly addresses the “lost in translation” problem.
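The zero-result measurement can be sketched as a replay of logged queries through both systems. The two search callables below are dictionary-backed stand-ins invented for the example. Note that this counts queries that now return anything at all; confirming the results are relevant still needs the judgments from Step 3.

```python
def zero_result_recovery(queries, lexical_search, hybrid_search):
    """Share of lexical zero-result queries that the hybrid system answers."""
    zero_before = [q for q in queries if not lexical_search(q)]
    recovered = [q for q in zero_before if hybrid_search(q)]
    rate = len(recovered) / len(zero_before) if zero_before else 0.0
    return rate, recovered


# Hypothetical canned results standing in for the two real systems.
lexical_results = {
    "cupcakes": ["post_1"],
    "small individual cakes with frosting": [],
    "italian coffee drink": [],
    "asdfgh": [],
}
hybrid_results = {
    "cupcakes": ["post_1"],
    "small individual cakes with frosting": ["post_1"],
    "italian coffee drink": ["post_9"],
    "asdfgh": [],
}

rate, recovered = zero_result_recovery(
    list(lexical_results),
    lambda q: lexical_results.get(q, []),
    lambda q: hybrid_results.get(q, []),
)
```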

Step 5: Reduce the Effort Tax by Surfacing Consensus

Consumption friction requires going beyond retrieval to presentation.

Summarize Comments

Apply a lightweight extractive summarization model to the top-ranked post’s comments, pulling out key advice (e.g., “Water snake plants every 2-3 weeks, let soil dry between waterings”). Display this as a snippet in search results.
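As a toy stand-in for such a model, the sketch below scores each sentence by the average corpus frequency of its words and keeps the top few in their original order. This frequency heuristic, the stopword list, and the sample comments are assumptions chosen for illustration; it is not the summarizer described in the article.

```python
import re
from collections import Counter

# Small illustrative stopword list, not a complete one.
STOPWORDS = {"a", "an", "and", "the", "i", "by", "mine", "every",
             "between", "out", "first", "let"}


def content_words(text):
    return [w for w in re.findall(r"[a-z0-9']+", text.lower())
            if w not in STOPWORDS]


def summarize(comments, max_sentences=2):
    """Pick the sentences whose words are most common across the thread."""
    sentences = [s.strip()
                 for comment in comments
                 for s in re.split(r"(?<=[.!?])\s+", comment)
                 if s.strip()]
    freq = Counter(w for s in sentences for w in content_words(s))

    def score(sentence):
        ws = content_words(sentence)
        return sum(freq[w] for w in ws) / len(ws) if ws else 0.0

    best = sorted(range(len(sentences)),
                  key=lambda i: (-score(sentences[i]), i))[:max_sentences]
    # Emit the chosen sentences in their original thread order.
    return [sentences[i] for i in sorted(best)]


comments = [
    "Water snake plants every 2-3 weeks. Mine sits by a north window.",
    "Let the soil dry between waterings. Overwatering kills snake plants.",
    "I water every 3 weeks and let the soil dry out first.",
]
summary = summarize(comments, max_sentences=2)
```

Because the output is built only from sentences that members actually wrote, an extractive approach avoids putting words in the community's mouth, at the cost of less fluent snippets.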

Sort by Relevance, Not Recency

Instead of chronological order, rank comments within a thread by their usefulness (number of likes, replies, or an internal relevance score). This lets users see the most helpful answers first.
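A usefulness ranking along those lines might look like the sketch below. The weights, the log damping of like and reply counts, and the `relevance` field are illustrative assumptions, not values taken from any production system.

```python
import math
from dataclasses import dataclass


@dataclass
class Comment:
    text: str
    likes: int
    replies: int
    relevance: float  # hypothetical internal relevance score in [0, 1]
    posted_at: int    # larger means more recent


def usefulness(c, w_likes=1.0, w_replies=0.5, w_relevance=10.0):
    # log1p damps raw counts so one viral comment cannot dominate.
    return (w_likes * math.log1p(c.likes)
            + w_replies * math.log1p(c.replies)
            + w_relevance * c.relevance)


thread = [
    Comment("Water every 2-3 weeks.", likes=40, replies=5, relevance=0.9, posted_at=1),
    Comment("Mine needs repotting.", likes=10, replies=12, relevance=0.6, posted_at=2),
    Comment("Nice plant!", likes=2, replies=0, relevance=0.2, posted_at=3),
]
by_usefulness = sorted(thread, key=usefulness, reverse=True)
by_recency = sorted(thread, key=lambda c: c.posted_at, reverse=True)
```

In this sample the two orderings are exact opposites: recency puts “Nice plant!” first, while the usefulness score surfaces the watering advice.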

Use “You Might Also Like”

Based on the user’s query and reading behavior, recommend related threads that address the same question from different angles. This preempts further searching.

Step 6: Enable Validation Through Trusted Community Signals

For high-stakes queries like product validation, the system must amplify trustworthy voices.

Surface Expert Comments

Identify group members who have a track record of high-quality contributions (e.g., high reputation scores, verified specialists). Boost their comments in the search results for validation queries.
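The boost can be as simple as a multiplier on the retrieval score for authors above a reputation threshold. In the sketch below, the reputation scale, the `min_reputation` cutoff, and the 30% boost are hypothetical values picked for the example.

```python
def boost_experts(results, reputation, boost=0.3, min_reputation=0.8):
    """Re-rank (comment_id, author, score) tuples, boosting trusted authors."""
    boosted = []
    for comment_id, author, score in results:
        # Authors with no reputation record are treated as unproven.
        if reputation.get(author, 0.0) >= min_reputation:
            score *= 1 + boost
        boosted.append((comment_id, author, score))
    return sorted(boosted, key=lambda r: -r[2])


results = [
    ("c1", "casual_fan", 0.80),
    ("c2", "corvette_restorer", 0.70),
    ("c3", "new_member", 0.50),
]
reputation = {"corvette_restorer": 0.95, "casual_fan": 0.40}
reranked = boost_experts(results, reputation)
```

A multiplicative boost keeps relevance in charge: an expert's off-topic comment with a low base score still ranks below a highly relevant comment from anyone else.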

Contextualize Advice

When a user searches “vintage Corvette buying tips,” show a card with top-voted advice from multiple group discussions, plus links to the original threads. This “wisdom of the crowd” approach helps the user make an informed decision.

Provide a “Validate This” Button

Next to each result, allow users to upvote or flag the advice. This feedback loop improves future validation answers and builds community trust.
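One hedged way to turn that feedback into a ranking signal is the lower bound of the Wilson score interval, which rewards advice with many upvotes over advice with a perfect record from only one or two votes. Treating a flag as a negative vote is an assumption made for this sketch.

```python
import math


def wilson_lower_bound(upvotes, flags, z=1.96):
    """Lower bound of the Wilson interval for the upvote share (95% by default)."""
    n = upvotes + flags
    if n == 0:
        return 0.0
    p = upvotes / n
    centre = p + z * z / (2 * n)
    margin = z * math.sqrt((p * (1 - p) + z * z / (4 * n)) / n)
    return (centre - margin) / (1 + z * z / n)


well_tested = wilson_lower_bound(upvotes=9, flags=1)
barely_tested = wilson_lower_bound(upvotes=1, flags=0)
```

Nine upvotes against one flag scores higher than a single unopposed upvote, so new advice has to earn its place as feedback accumulates.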

Tips for Success

- Iterate with real users. Launch early with a small group and gather qualitative feedback—metrics alone won't capture nuance.

- Monitor latency. Hybrid systems can be slower. Cache frequent queries and use approximate nearest neighbor search to keep response times under 200ms.

- Continuously retrain embeddings. Community language evolves (e.g., new memes or product names). Schedule monthly model updates.

- Handle privacy carefully. Ensure embeddings don't leak sensitive text. Use differential privacy techniques if needed.

- Don't ignore error cases. Even a 0.1% increase in false positives can erode trust. Run manual spot-checks weekly.

- Document your architecture. As your team grows, clear documentation of the hybrid retrieval pipeline and evaluation framework will save countless hours.

By following these steps, you can transform a basic group search into a powerful community knowledge engine—one that truly understands users' intent, surfaces the right answers, and builds confidence in decisions. The result: higher engagement, happier communities, and the full power of shared wisdom unlocked.